Hypothesis Testing: Mood's Median Test

What is Mood's Median Test?

Mood's median test is an inferential technique to assess two competing hypotheses about the population medians across k groups. Specifically, Mood's median test assesses whether the k population medians are equal. If there is significant evidence that the population medians are different, we want to conduct post hoc testing to investigate where the medians are different.

Mood's median test evaluates the equality of k population medians based on observed data assuming that the observations are representative of the population of interest and independent. Further, the data must be continuous for the test statistic to be appropriate.

Mood's median test compares the observed data with what we expect under the null hypothesis that all group medians are equal. The resulting p-value tells us how likely it is, when the null hypothesis is true, to observe evidence for the alternative hypothesis at least as strong as what we have. If the p-value is less than the specified significance level (e.g., less than 0.05), we reject the null hypothesis in favor of the alternative hypothesis. In that case, Mood's median test indicates that we have significant evidence that at least one population median is different. Otherwise, we fail to reject the null hypothesis, indicating we do not have significant evidence that at least one population median is different.
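To make the comparison of observed and expected counts concrete, here is a minimal Python sketch of the textbook form of the test, using only the standard library. The function names `chi2_sf` and `moods_median_test` are hypothetical and are not part of the app. The sketch assumes that values equal to the grand median are counted as "not above" (one common convention) and applies no continuity correction, so its output may differ slightly from what the app reports.

```python
import math
from statistics import median


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum over i < df/2 of (x/2)^i / i!
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= half / i
            total += term
        return math.exp(-half) * total
    # Odd df: start from the df = 1 case and add one term per extra 2 df.
    total = math.erfc(math.sqrt(half))
    term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
    for i in range(df // 2):
        total += term
        term *= x / (2 * i + 3)
    return total


def moods_median_test(groups):
    """Return (grand median, chi-square statistic, df, p-value)."""
    pooled = [v for g in groups for v in g]
    grand = median(pooled)
    # Observed counts: values above the grand median vs. not above it.
    above = [sum(v > grand for v in g) for g in groups]
    below = [len(g) - a for g, a in zip(groups, above)]
    if sum(above) == 0 or sum(below) == 0:
        raise ValueError("all values fall on one side of the grand median")
    n = len(pooled)
    stat = 0.0
    for g, a, b in zip(groups, above, below):
        for observed, row_total in ((a, sum(above)), (b, sum(below))):
            # Expected count if every group shared the same median.
            expected = row_total * len(g) / n
            stat += (observed - expected) ** 2 / expected
    df = len(groups) - 1
    return grand, stat, df, chi2_sf(stat, df)


grand, stat, df, p = moods_median_test([[1, 2, 3, 4, 5], [6, 7, 8, 9, 10]])
print(grand, stat, df, p)
```

In this toy example the two groups do not overlap, so every value in the first group falls below the grand median and every value in the second falls above it, which produces a large statistic and a small p-value.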

If the Mood's median test result is significant, we can conduct post hoc tests to investigate which medians differ. The post hoc procedure for Mood's median test is a set of pairwise Mood's median tests, accompanied by pairwise bootstrap confidence intervals. We correct for multiple simultaneous inferences by applying a Bonferroni or Benjamini-Hochberg correction.
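The two corrections and the bootstrap interval can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not the app's implementation: the function names are hypothetical, and the interval shown is a simple percentile bootstrap for the difference in medians, which may not be the bootstrap variant the app uses.

```python
import random
from statistics import median


def bonferroni(pvalues):
    """Multiply each p-value by the number of tests, capped at 1."""
    m = len(pvalues)
    return [min(1.0, p * m) for p in pvalues]


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def bootstrap_median_diff_ci(a, b, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for median(a) - median(b)."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    diffs = sorted(
        median(rng.choices(a, k=len(a))) - median(rng.choices(b, k=len(b)))
        for _ in range(n_boot)
    )
    tail = (1.0 - level) / 2.0
    lo = diffs[int(tail * n_boot)]
    hi = diffs[min(n_boot - 1, int((1.0 - tail) * n_boot))]
    return lo, hi


raw = [0.01, 0.04, 0.03]  # made-up p-values for three pairwise tests
print(bonferroni(raw))
print(benjamini_hochberg(raw))
print(bootstrap_median_diff_ci(list(range(10, 20)), list(range(0, 10))))
```

Bonferroni controls the chance of any false rejection and is the more conservative choice; Benjamini-Hochberg controls the false discovery rate and usually rejects more often when many pairs are compared.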

How to use this app?

Step 1: Go to the 'Dataset and Hypothesis' tab and upload your dataset as a .csv file.

Step 2: You can check the assumptions in the 'Summary & Assumptions Check' tab.

Step 3: You can check the result of the Mood's median test (test statistics, decision making, and test visualization) in the 'Hypothesis Test' tab.

Step 4 (Optional): If the hypothesis test produces a significant result, you can view the results of the appropriate post hoc procedures in the 'Post Hoc' tab.

Contact us

Please contact us if you have any questions at datascience@colgate.edu.

Example 1

Within the Mood's median test app, we provide the penguin data, which include measurements for penguin species inhabiting islands in the Palmer Archipelago and are made available through the palmerpenguins package for R (Gorman et al., 2014). Suppose researchers aimed to evaluate whether species of penguins (Adelie, Chinstrap, and Gentoo) have differing median flipper lengths (mm).

Here, we have three samples of observations (the species) and a continuous attribute (flipper length). We will use Mood's median test to evaluate whether the data support the claim that at least one species has a different population median flipper length.

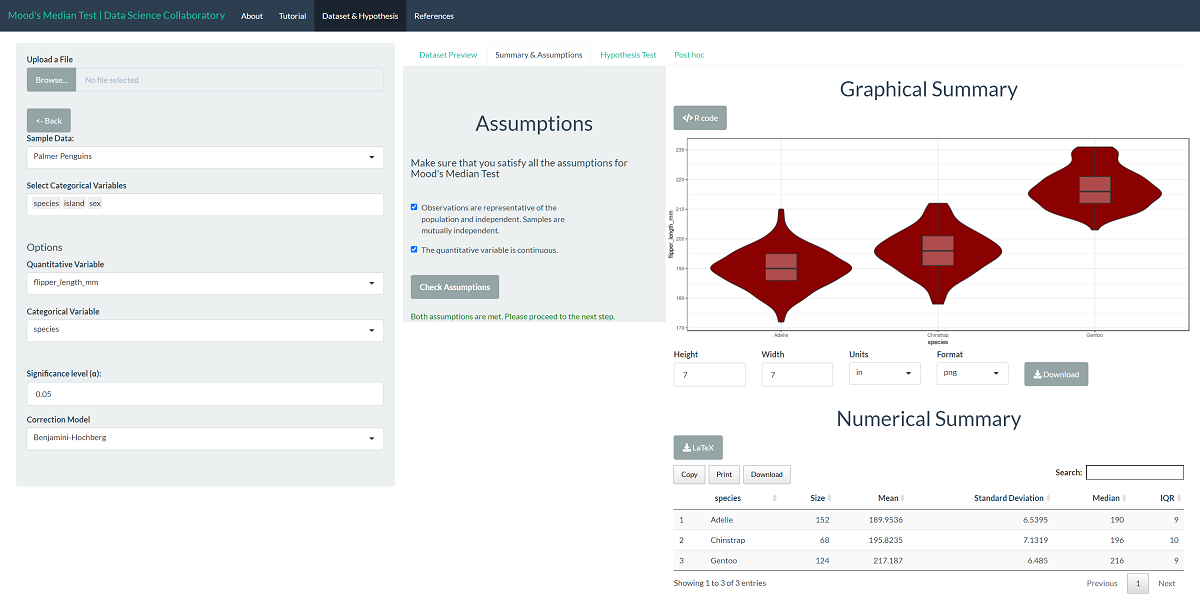

First, we load the Mood's median test app. Second, we click 'Sample dataset' to load the penguin data. Once the data are loaded, we select the variable (flipper_length_mm) and the sample (species). The data summary provides our first look at the data.

This plot shows that the data are roughly normally distributed, as the densities are symmetric and bell-shaped. Unlike the ANOVA test, we don't have to check whether the variances are similar or the data are normally distributed. Still, we note that evaluating whether the observations are representative of the population of interest and independent is more challenging. These data were collected from many penguin nests across three different islands in the Palmer Archipelago, meaning the data are likely representative. We trust that the researchers collected data in a way that made the observations nearly independent.

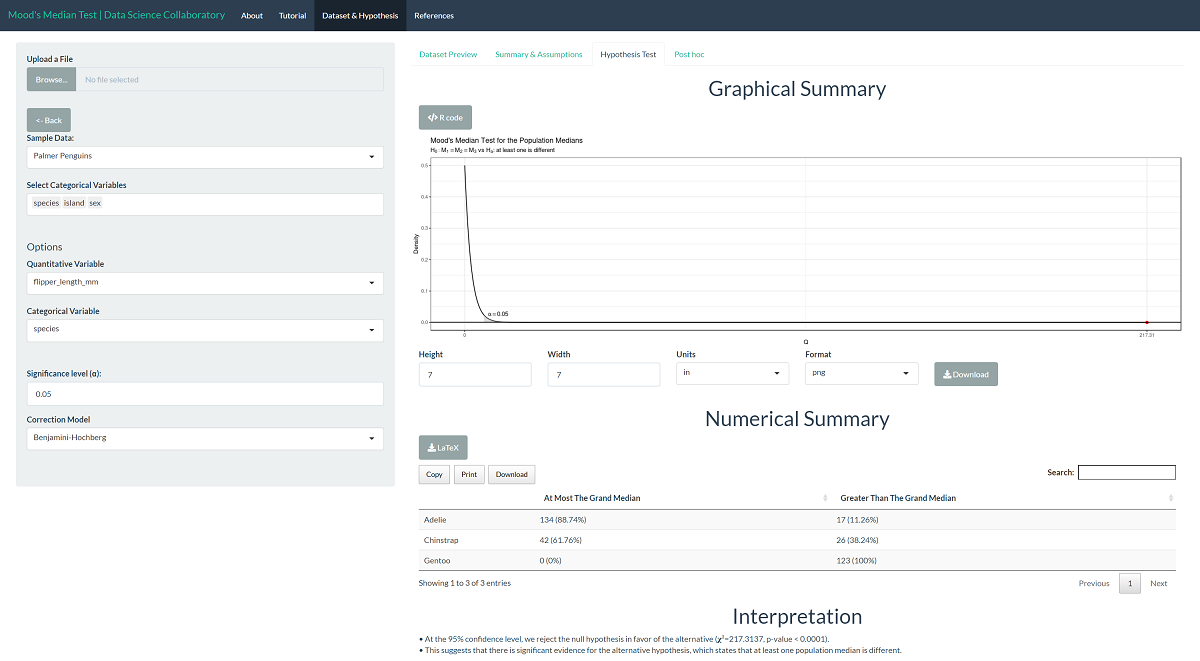

The 'Hypothesis Test' tab shows the result of Mood's median test. As we might expect after observing the data summaries, there is significant evidence that the population median flipper lengths (mm) differ across species (χ²=217.3137, p<0.0001). This tells us that at least one population median is different, but not which population medians or in what direction.

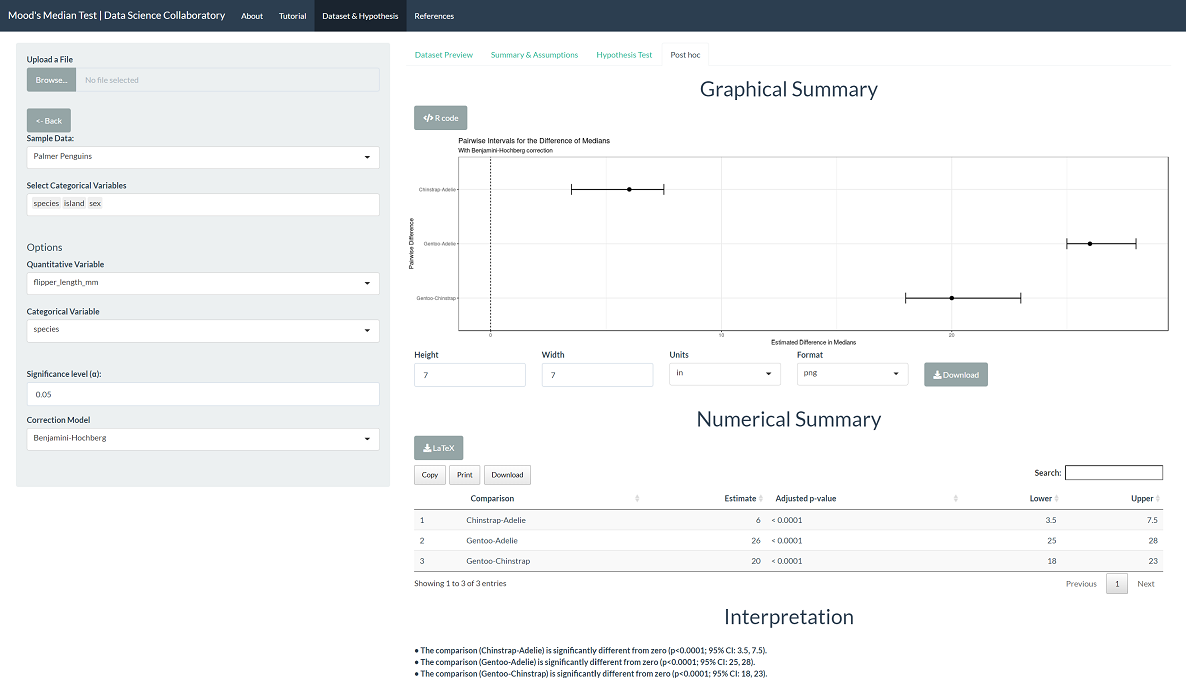

To evaluate differences among the populations, click 'Post Hoc'. All differences are statistically significant, with p-values less than 0.0001. This is not all that surprising. The data summaries show that Gentoo penguins have visibly different flipper lengths (mm). While Chinstrap and Adelie are closer in flipper length (mm), they are still significantly different based on our observations.

Gorman KB, Williams TD, Fraser WR (2014) Ecological Sexual Dimorphism and Environmental Variability within a Community of Antarctic Penguins (Genus Pygoscelis). PLoS ONE 9(3): e90081. doi:10.1371/journal.pone.0090081

Example 2

Within the Mood's median test app, we provide the MFAP4 data, including measurements for Hepatitis C patients collected by the German Network of Excellence for Viral Hepatitis and studied by Bracht et al. (2016). These researchers aimed to evaluate whether the human microfibrillar-associated protein 4 (MFAP4, U/ml) varies across the disease stages of Hepatitis C (0, 1, 2, 3, 4). The researchers can use Mood's median test to evaluate MFAP4 as a biomarker for disease stages of Hepatitis C.

Here, we have five samples of observations (the disease stages) and a continuous attribute (MFAP4 U/ml). We will use Mood's median test to evaluate whether the data support the claim that the MFAP4 levels vary across disease stages; i.e., at least one disease stage has a different population median MFAP4 U/ml.

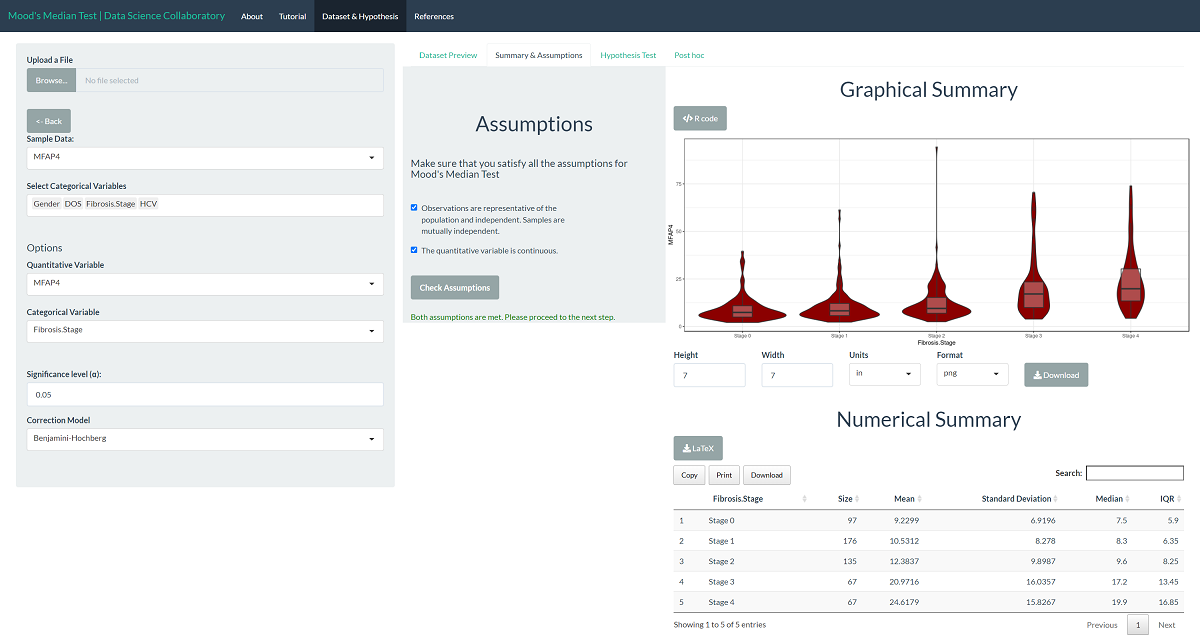

First, we load the Mood's median test app. Second, we click 'Sample dataset' to load the MFAP4 data. Once the data are loaded, we select the variable (MFAP4) and the sample (Fibrosis.Stage). The data summary provides our first look at the data.

At this point, we observe that the data are heavily skewed and that the variances differ. Unlike the ANOVA test, we don't have to check whether the variances are similar or the data are normally distributed. We note that evaluating whether the observations are representative of the population of interest and independent is more challenging. In their paper, Bracht et al. (2016) tell us these data were collected at different sites using a protocol meant to reduce bias, meaning the data are likely to be representative. We trust that the researchers collected data in a way that made the observations nearly independent.

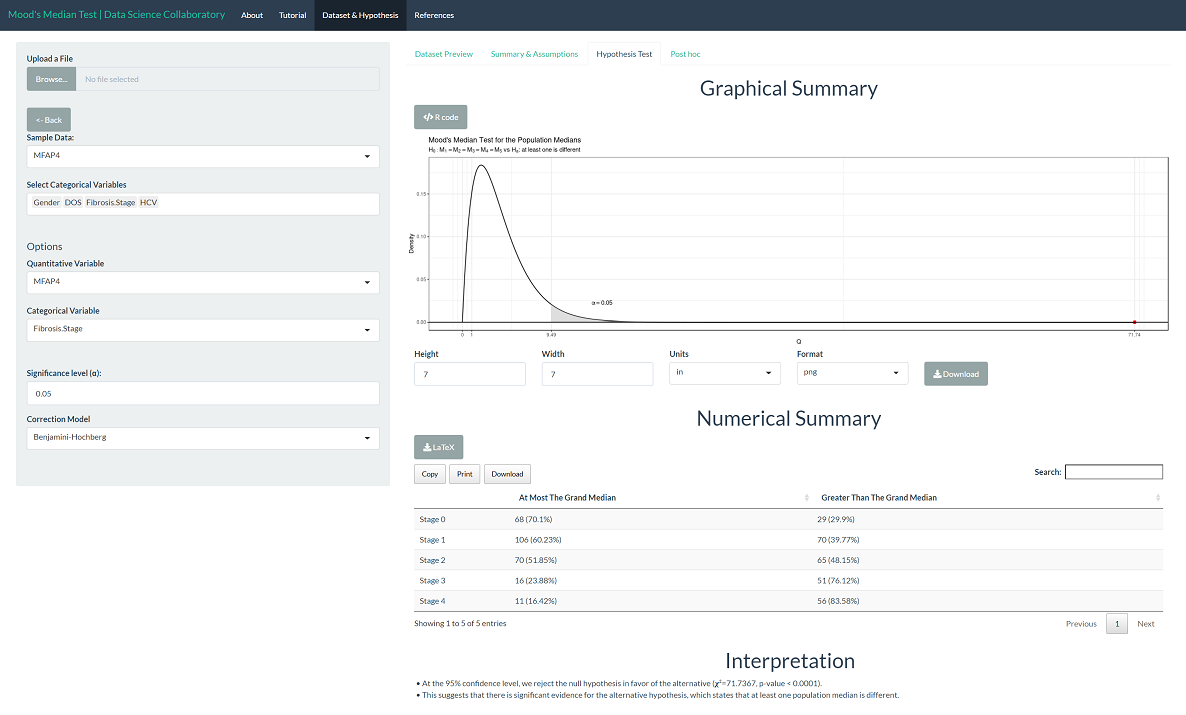

The 'Hypothesis Test' tab shows the result of Mood's median test. As we might expect after observing the data summaries, there is significant evidence that the population median MFAP4 U/ml levels differ across disease stages (χ²=71.7367, p<0.0001). This tells us that at least one population median is different, but not which population median(s) or in what direction.

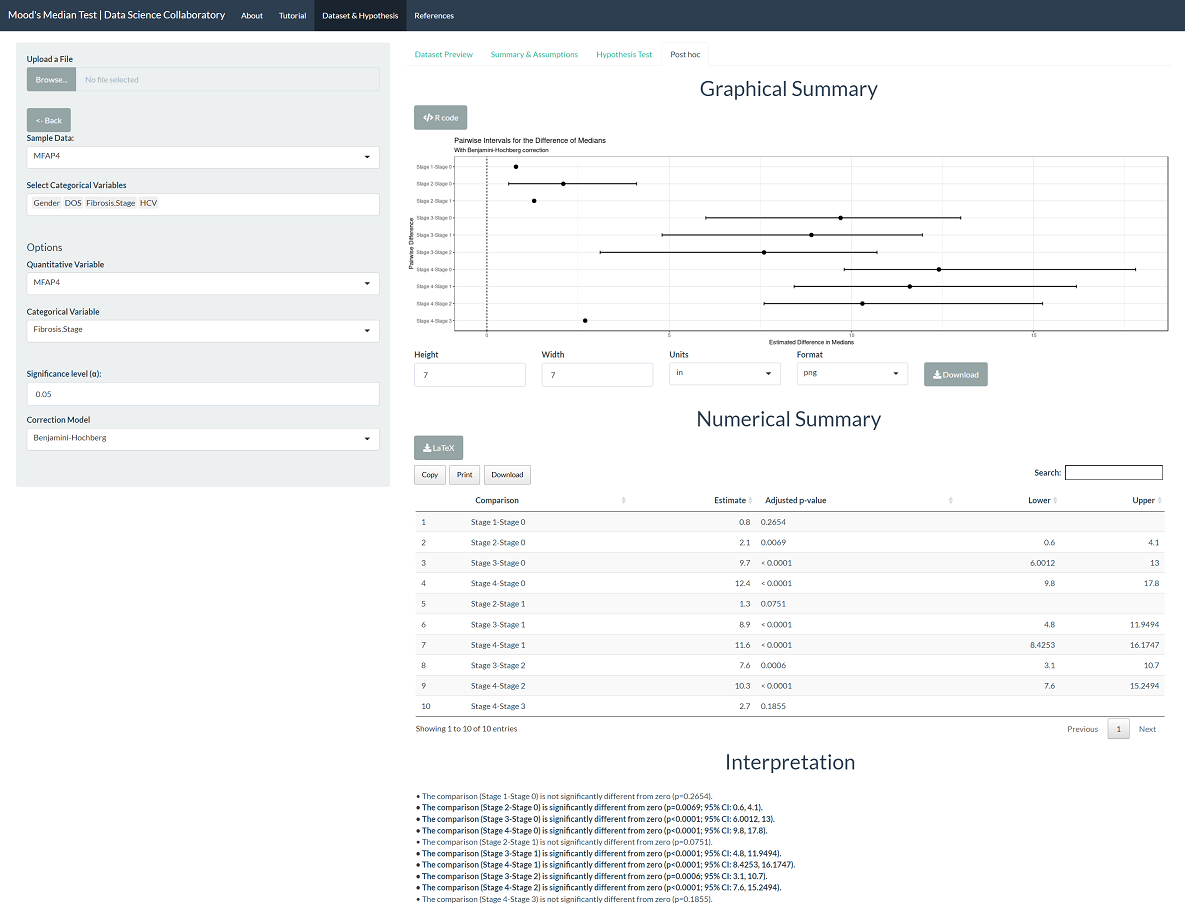

To evaluate differences among the populations, click 'Post Hoc'. We see that stage 0 is different from stages 2-4; stage 1 is different from stages 3-4; stage 2 is different from stages 0 and 3-4; stage 3 is different from stages 0-2; and stage 4 is different from stages 0-2. That is, the pairwise Mood's median tests yield the groupings (0-1), (1-2), and (3-4), where the stages within a grouping are not significantly different from one another. Note that this result matches that of the ANOVA test, which Bracht et al. (2016) use to suggest that MFAP4 is a promising biomarker for distinguishing patients with no to moderate hepatic fibrosis (stages 0-2) from patients with severe fibrosis and cirrhosis (stages 3-4).
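The groupings can be read off mechanically from the pairwise results: any pair of stages that is not flagged as significantly different belongs to a shared grouping. The short Python sketch below encodes the significant pairs listed above and prints the remaining pairs; the variable names are illustrative only.

```python
from itertools import combinations

stages = [0, 1, 2, 3, 4]

# Pairs reported as significantly different in the 'Post Hoc' tab.
significant = {(0, 2), (0, 3), (0, 4), (1, 3), (1, 4), (2, 3), (2, 4)}

# Every remaining pair is not significantly different.
not_different = [
    pair for pair in combinations(stages, 2) if pair not in significant
]
print(not_different)  # [(0, 1), (1, 2), (3, 4)]
```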

Bracht, T., Molleken, C., Ahrens, M., Poschmann, G., Schlosser, A., Eisenacher, M., ... & Sitek, B. (2016). Evaluation of the biomarker candidate MFAP4 for non-invasive assessment of hepatic fibrosis in hepatitis C patients. Journal of Translational Medicine, 14(1), 1-9.

Example 3

Within the Mood's median test app, we provide U.S. News and World Report's College Data, which include measurements for many U.S. colleges from the 1995 issue of U.S. News and World Report and are made available through the ISLR package for R (James et al., 2017). Suppose we aimed to evaluate whether alumni donate at different rates at private and public colleges and universities.

Here, we have two samples of observations (private/public) and a discrete attribute (percent of alumni who donate). We will use Mood's median test to evaluate whether the data support the claim that there is a difference in the median percent of alumni who donate across types of colleges and universities.

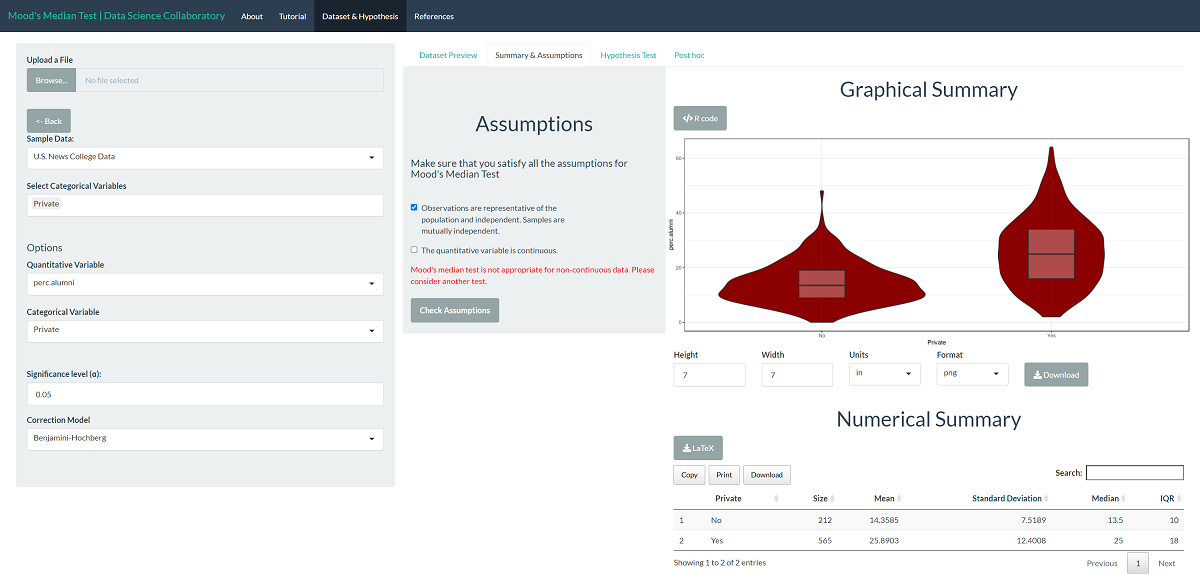

First, we load the Mood's median test app. Second, we click 'Sample dataset' to load the U.S. News College data. Once the data are loaded, we select the variable (perc.alumni) and the sample (private). The data summary provides our first look at the data.

We note that our data are discrete. There are only 61 unique values for the percentage of alumni donating among 777 institutions. In fact, the median is 21 percent, and 20 institutions report this value. In this case, continuing with Mood's median test is inappropriate, and we recommend choosing a different test for these data. Similarly, the Kruskal-Wallis test can suffer when there are a substantial number of ties, but it performs reasonably with a moderate or low number of ties. Further, while ANOVA tests require normality, we can proceed with them when the number of unique values is moderately high and the data are roughly bell-shaped, because ANOVA is robust to mild departures from normality.
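A quick way to gauge how discrete a variable is before running the test is to count its unique values and the number of observations tied at the median. The sketch below does this with the Python standard library; `tie_summary` is a hypothetical helper, and the values shown are a made-up toy list, not the College data.

```python
from collections import Counter
from statistics import median


def tie_summary(values):
    """Count unique values and how many observations equal the median."""
    counts = Counter(values)
    m = median(values)
    return {
        "n": len(values),
        "unique": len(counts),
        "median": m,
        # Zero when the median falls between two observed values.
        "at_median": counts.get(m, 0),
    }


toy = [1, 2, 2, 3, 3, 3, 4]  # illustrative values only
print(tie_summary(toy))
```

A small number of unique values relative to the sample size, or many observations sitting exactly on the median, is a signal that the continuity assumption is doubtful.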

Gareth James, Daniela Witten, Trevor Hastie and Rob Tibshirani (2017). ISLR: Data for an Introduction to Statistical Learning with Applications in R. R package version 1.2. https://CRAN.R-project.org/package=ISLR